What are MCP Transports?

March 16, 2026

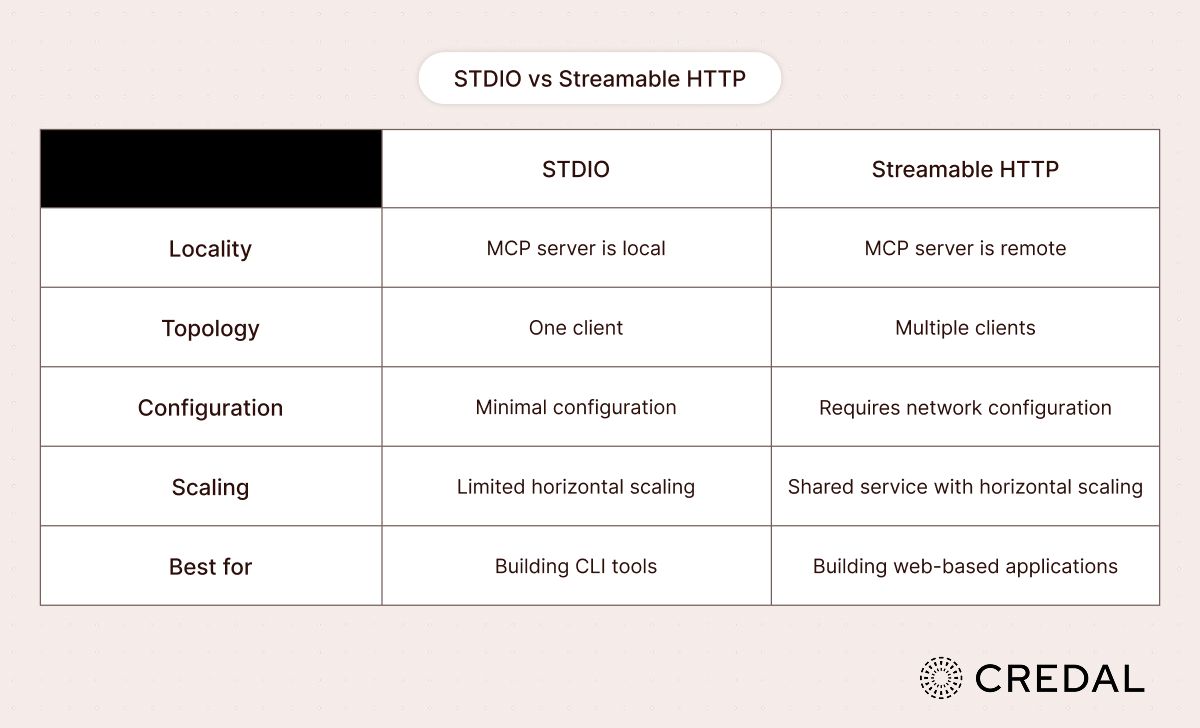

MCP ships with two built-in transports, the mechanisms that carry messages between client and server: Standard Input/Output (STDIO) and Streamable HTTP. Between them, they can handle most types of MCP transaction, including those that transmit gigabytes of data.

Today, we want to discuss the benefits of each transport option and when developers should consider building a custom transport.

STDIO & Streamable HTTP

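At a high level, STDIO and Streamable HTTP differ in where the server runs and how messages travel. With STDIO, the client launches the server as a local subprocess and the two exchange messages over standard input and output. With Streamable HTTP, the server runs as an independent process, possibly on another machine, and clients reach it over HTTP.

For developers with a networking background, a loose analogy is the difference between a raw TCP connection and HTTP. STDIO maintains a persistent, stateful channel between one client and one server process, much like TCP's reliable, ordered byte stream. Streamable HTTP follows HTTP's request-response model: each message is an independent request, and a server can run statelessly (sessions are optional), trading session continuity for horizontal scalability and infrastructure simplicity.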

How is STDIO configured?

On the server side, you create a `Server` instance with a name and version, then initialize a `StdioServerTransport()` and call a connection function to begin listening over standard input/output.
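To make the wire format concrete, here is a minimal sketch of what sits underneath a STDIO server transport. It uses only the Python standard library rather than an MCP SDK, and it assumes newline-delimited JSON-RPC 2.0 messages, which is how the MCP specification frames STDIO traffic. The names `serve`, `handle_line`, and the `ping` handler are hypothetical and chosen for illustration; the MCP initialization handshake is omitted.

```python
import io
import json
import sys
from typing import Callable, Dict, Optional, TextIO

# Hypothetical handler table: JSON-RPC method name -> function of params.
Handlers = Dict[str, Callable[[dict], object]]


def _error(request_id, code: int, message: str) -> dict:
    return {"jsonrpc": "2.0", "id": request_id,
            "error": {"code": code, "message": message}}


def handle_line(line: str, handlers: Handlers) -> Optional[dict]:
    """Turn one input line into a response, or None if no reply is due."""
    try:
        request = json.loads(line)
    except json.JSONDecodeError:
        return _error(None, -32700, "Parse error")
    if (not isinstance(request, dict)
            or request.get("jsonrpc") != "2.0"
            or not isinstance(request.get("method"), str)):
        return _error(None, -32600, "Invalid Request")
    is_notification = "id" not in request  # notifications never get a reply
    handler = handlers.get(request["method"])
    if handler is None:
        if is_notification:
            return None
        return _error(request["id"], -32601, "Method not found")
    result = handler(request.get("params") or {})
    if is_notification:
        return None
    return {"jsonrpc": "2.0", "id": request["id"], "result": result}


def serve(handlers: Handlers, stdin: TextIO = sys.stdin,
          stdout: TextIO = sys.stdout) -> None:
    """Read one JSON-RPC message per line; write one response per line."""
    for line in stdin:
        if not line.strip():
            continue  # skip blank lines
        response = handle_line(line, handlers)
        if response is not None:
            stdout.write(json.dumps(response) + "\n")
            stdout.flush()  # the client is waiting on this line


# In-memory demonstration; a real server would call serve(handlers) with
# the default sys.stdin and sys.stdout.
demo_out = io.StringIO()
serve({"ping": lambda params: {}},
      io.StringIO('{"jsonrpc": "2.0", "id": 1, "method": "ping"}\n'),
      demo_out)
```

Because stdout carries the protocol, a server built this way should send its logs to stderr instead.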

On the client side, the setup mirrors the server: you instantiate a `Client` with a name and version, then create a `StdioClientTransport` that points to the server binary.
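The client half can be sketched the same way. The example below is not the SDK's `StdioClientTransport`; it is a standard-library illustration of the idea that the client owns the server process. The `call_over_stdio` function and the `STAND_IN_SERVER` script are hypothetical, and a real MCP client would perform an initialization handshake before sending other requests.

```python
import json
import subprocess
import sys

# Hypothetical stand-in for "the server binary": a tiny child process that
# answers every request line with an empty result.
STAND_IN_SERVER = r'''
import json, sys
for line in sys.stdin:
    req = json.loads(line)
    print(json.dumps({"jsonrpc": "2.0", "id": req["id"], "result": {}}),
          flush=True)
'''


def call_over_stdio(command: list, method: str, params: dict,
                    timeout: float = 10.0) -> dict:
    """Launch the server as a subprocess, send one request, return the reply."""
    proc = subprocess.Popen(command, stdin=subprocess.PIPE,
                            stdout=subprocess.PIPE, text=True)
    request = {"jsonrpc": "2.0", "id": 1, "method": method, "params": params}
    try:
        proc.stdin.write(json.dumps(request) + "\n")  # one message per line
        proc.stdin.flush()
        return json.loads(proc.stdout.readline())
    finally:
        proc.stdin.close()  # EOF on stdin tells the server to shut down
        try:
            proc.wait(timeout=timeout)
        except subprocess.TimeoutExpired:
            proc.kill()  # escalate if the server does not exit on its own
            proc.wait()
        proc.stdout.close()


response = call_over_stdio([sys.executable, "-c", STAND_IN_SERVER], "ping", {})
```

Closing the child's stdin and then waiting, with a kill as the fallback, keeps the server's lifetime tied to the client's.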

How is Streamable HTTP configured?

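On the server side, Streamable HTTP requires an HTTP framework such as Express (Node) or Starlette (Python). You define an `/mcp` endpoint that handles both `POST` and `GET` requests. A `POST` to `/mcp` receives a JSON-RPC request body, which you pass to the transport's `handleRequest()` method. A `GET` to the same endpoint can open a Server-Sent Events stream for server-initiated messages.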
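The endpoint logic can be sketched without any framework. The example below uses Python's built-in `http.server` instead of Express or Starlette, and `handle_post` and `McpHandler` are hypothetical names. It assumes the simplest server shape: JSON responses only, no sessions, and no server-initiated stream, so `GET` is answered with 405.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer
from typing import Optional, Tuple


def handle_post(body: bytes) -> Tuple[int, Optional[bytes]]:
    """Map a POST body to (HTTP status, JSON response body or None)."""
    try:
        message = json.loads(body)
    except ValueError:  # covers malformed JSON and undecodable bytes
        error = {"jsonrpc": "2.0", "id": None,
                 "error": {"code": -32700, "message": "Parse error"}}
        return 400, json.dumps(error).encode("utf-8")
    if not isinstance(message, dict) or message.get("jsonrpc") != "2.0":
        error = {"jsonrpc": "2.0", "id": None,
                 "error": {"code": -32600, "message": "Invalid Request"}}
        return 400, json.dumps(error).encode("utf-8")
    if "method" not in message or "id" not in message:
        return 202, None  # notification or response: accepted, no body
    # Placeholder dispatch: a real server would route on message["method"].
    reply = {"jsonrpc": "2.0", "id": message["id"], "result": {}}
    return 200, json.dumps(reply).encode("utf-8")


class McpHandler(BaseHTTPRequestHandler):
    def do_POST(self) -> None:
        if self.path != "/mcp":
            self.send_error(404)
            return
        length = int(self.headers.get("Content-Length", 0))
        status, payload = handle_post(self.rfile.read(length))
        self.send_response(status)
        if payload is not None:
            self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(payload or b"")))
        self.end_headers()
        if payload is not None:
            self.wfile.write(payload)

    def do_GET(self) -> None:
        # This sketch offers no SSE stream, so GET is not allowed.
        self.send_error(405)
```

To try it locally, something like `HTTPServer(("127.0.0.1", 8000), McpHandler).serve_forever()` would start the server on the loopback interface; the port is an arbitrary choice.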

On the client side, configuration is straightforward. You create a `Client` instance and initialize an HTTP client transport (`StreamableHTTPClientTransport` in the TypeScript SDK) with the server URL, then call a connect function.
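Under the hood, each client call is an HTTP `POST` carrying one JSON-RPC message. The sketch below builds such a request with `urllib` from the standard library. The helper names and the example URL are hypothetical, and the code assumes the server answers with a plain JSON body; a fuller client would also handle a `text/event-stream` response and session headers.

```python
import json
import urllib.request


def build_request(url: str, method: str, params: dict,
                  request_id: int = 1) -> urllib.request.Request:
    """Wrap one JSON-RPC request in an HTTP POST."""
    body = json.dumps({"jsonrpc": "2.0", "id": request_id,
                       "method": method, "params": params}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        method="POST",
        headers={
            "Content-Type": "application/json",
            # Advertise both response styles the server may choose from.
            "Accept": "application/json, text/event-stream",
        },
    )


def call(url: str, method: str, params: dict, timeout: float = 10.0) -> dict:
    """Send the request and parse a JSON response (SSE is not handled here)."""
    request = build_request(url, method, params)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read())


# Hypothetical endpoint; nothing is sent until call() is invoked.
example = build_request("http://127.0.0.1:8000/mcp", "ping", {})
```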

Building a custom transport

If you need transport-level behavior that the built-in options do not provide, such as stricter security or specialized error handling, MCP lets you implement a custom transport. There are a few constraints:

- (i) the transport must support client-server messaging via JSON-RPC 2.0

- (ii) it needs to manage connection lifecycles and error handling

- (iii) it needs to be hooked up to a server’s run function
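As an illustration of these three constraints, here is a minimal in-process transport. It is a sketch under stated assumptions, not the interface any MCP SDK defines: `InMemoryTransport`, its callbacks, and `run_server` are all hypothetical names, and messages are delivered synchronously to keep the example short.

```python
import json
from typing import Callable, Dict, Optional


class InMemoryTransport:
    """Hypothetical custom transport that delivers messages to a linked peer."""

    def __init__(self) -> None:
        self.peer: Optional["InMemoryTransport"] = None
        self.on_message: Optional[Callable[[dict], None]] = None
        self.on_error: Optional[Callable[[Exception], None]] = None
        self.on_close: Optional[Callable[[], None]] = None
        self.is_open = False

    @classmethod
    def create_pair(cls):
        a, b = cls(), cls()
        a.peer, b.peer = b, a
        return a, b

    def start(self) -> None:
        self.is_open = True

    def send(self, message: dict) -> None:
        # (ii) lifecycle: refuse to send on a closed connection.
        if not self.is_open or self.peer is None or not self.peer.is_open:
            raise ConnectionError("transport is closed")
        # (i) only JSON-RPC 2.0 messages go on the wire.
        if message.get("jsonrpc") != "2.0":
            raise ValueError("not a JSON-RPC 2.0 message")
        wire = json.dumps(message)  # serialize as a real transport would
        try:
            if self.peer.on_message is not None:
                self.peer.on_message(json.loads(wire))
        except Exception as exc:
            # (ii) error handling: report to the receiver's hook if present.
            if self.peer.on_error is None:
                raise
            self.peer.on_error(exc)

    def close(self) -> None:
        if not self.is_open:
            return
        self.is_open = False
        if self.on_close is not None:
            self.on_close()
        if self.peer is not None and self.peer.is_open:
            self.peer.close()  # closing one end closes the other


def run_server(transport: InMemoryTransport,
               handlers: Dict[str, Callable[[dict], object]]) -> None:
    """(iii) Hypothetical run function that wires handlers to a transport."""
    def on_message(message: dict) -> None:
        handler = handlers.get(message.get("method"))
        if handler is not None and "id" in message:
            transport.send({"jsonrpc": "2.0", "id": message["id"],
                            "result": handler(message.get("params", {}))})
    transport.on_message = on_message
    transport.start()
```

A client end created with `InMemoryTransport.create_pair()` can then `start()`, set `on_message`, and `send()` requests to the server end.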

The deciding factors in choosing a custom transport over one of the out-of-the-box options are whether your application requires custom network protocols, communication-specific performance optimization, or integration with existing systems or communication channels.

Checklist for setting up a custom transport

According to the MCP documentation, a few principles help a custom transport operate without errors or unexpected timeouts:

- Handle connection lifecycle properly

- Implement proper error handling

- Clean up resources on connection close

- Use appropriate timeouts

- Validate messages before sending

- Log transport events for debugging and observability

- Implement reconnection logic when appropriate

- Handle backpressure in message queues

- Monitor connection health

Adherence to these principles produces transport implementations that are straightforward to deploy, instrumentable for debugging, and architecturally resilient against edge cases: qualities that translate directly into reduced operational overhead and lower mean time to resolution when failures occur.
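Three of the checklist items (validating messages before sending, using timeouts, and handling backpressure) can be sketched together. The `validate_message` function and `Outbox` class below are hypothetical, and the limits are arbitrary example values; the validation covers only the basic JSON-RPC 2.0 message shapes.

```python
import queue


def validate_message(message) -> None:
    """Raise ValueError unless the message has a valid JSON-RPC 2.0 shape."""
    if not isinstance(message, dict):
        raise ValueError("message must be a JSON object")
    if message.get("jsonrpc") != "2.0":
        raise ValueError('"jsonrpc" must be "2.0"')
    has_result = "result" in message
    has_error = "error" in message
    if isinstance(message.get("method"), str):
        # Request or notification: must not carry a result or an error.
        if has_result or has_error:
            raise ValueError("a request cannot contain result or error")
    else:
        # Response: exactly one of result or error, plus an id.
        if has_result == has_error:
            raise ValueError("a response needs exactly one of result or error")
        if "id" not in message:
            raise ValueError("a response needs an id")


class Outbox:
    """Bounded send queue: producers block briefly, then fail, when it is full."""

    def __init__(self, max_pending: int = 100, send_timeout: float = 5.0) -> None:
        self._queue = queue.Queue(maxsize=max_pending)
        self._send_timeout = send_timeout

    def enqueue(self, message: dict) -> None:
        validate_message(message)  # reject bad messages before they queue up
        try:
            self._queue.put(message, timeout=self._send_timeout)
        except queue.Full:
            raise TimeoutError("outbox full: the receiver is not keeping up") from None

    def next_message(self, timeout: float = None) -> dict:
        """Called by the writer loop; raises queue.Empty if the timeout expires."""
        return self._queue.get(timeout=timeout)
```

Bounding the queue turns a slow receiver into an explicit, catchable error instead of unbounded memory growth.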

Security for custom transports

When implementing transports, whether through a built-in mode or custom logic, enforcing a security layer is of the utmost importance to protect your data and systems.

A few measures help protect a transport:

- Authentication: Always validate client credentials and pair that with secure token handling (short-lived, rotating tokens).

- Authorization: Enforce RBAC or another form of access management to prevent privilege escalation for users and agents. Alternatively, delegate access to a robust AI orchestration platform such as Credal.ai.

- Input Sanitization & Size Constraints: Ensure all external data is sanitized prior to interacting with any internal systems to protect against prompt injection, tool poisoning, indirect injection, and other common AI security exploits. Implement message size limits and connection pooling limits to prevent resource exhaustion & denial-of-service attacks.

- Data Security: Encrypt all sensitive data (such as credentials, PII, financial records, etc.) and use TLS for all network transport, enforcing mutual TLS (mTLS) where strict client authentication is required.

- Rate Limiting & Traffic Controls: Implement rate limiting, reasonable timeouts, and monitor for unusual patterns to protect against denial-of-service attacks. If a denial-of-service situation does occur, ensure that your transport can respond by throttling or dropping excess requests to limit service degradation.

- Network Security: For HTTP-based transports (including Streamable HTTP), validate Origin headers to prevent DNS rebinding attacks. For local servers, bind only to localhost (127.0.0.1) instead of all interfaces (0.0.0.0) to reduce the network attack surface.
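Several of these controls reduce to small checks that run before a message reaches any handler. The sketch below combines Origin validation, a message size limit, and a token-bucket rate limiter. Every name and value in it is a hypothetical example (`ALLOWED_ORIGINS`, `MAX_MESSAGE_BYTES`, `admit`, and the status codes chosen), and whether to accept requests with no `Origin` header is a policy decision that this sketch makes permissively for non-browser clients.

```python
import time
from typing import Callable, Optional, Set

ALLOWED_ORIGINS = {"http://localhost:3000"}  # hypothetical allowlist
MAX_MESSAGE_BYTES = 1_000_000                # hypothetical size limit


def origin_allowed(origin: Optional[str],
                   allowed: Set[str] = ALLOWED_ORIGINS) -> bool:
    """Reject browser requests from unknown origins (DNS rebinding defense)."""
    if origin is None:
        return True  # assumption: non-browser clients send no Origin header
    return origin.strip().lower().rstrip("/") in allowed


class TokenBucket:
    """Allow bursts up to `capacity`, refilled at `rate_per_sec` tokens/second."""

    def __init__(self, rate_per_sec: float, capacity: int,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.rate = rate_per_sec
        self.capacity = capacity
        self.tokens = float(capacity)
        self.clock = clock
        self.updated = clock()

    def allow(self) -> bool:
        now = self.clock()
        elapsed = now - self.updated
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.updated = now
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False


def admit(origin: Optional[str], body: bytes, bucket: TokenBucket) -> int:
    """Return an HTTP status: 200 to proceed, otherwise the rejection code."""
    if not origin_allowed(origin):
        return 403  # unknown origin
    if len(body) > MAX_MESSAGE_BYTES:
        return 413  # payload too large
    if not bucket.allow():
        return 429  # too many requests
    return 200
```

In practice a server would likely keep one bucket per client or per credential rather than a single shared one, so that one noisy caller cannot exhaust the budget for everyone.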

Note: HTTP with Server-Sent Events (SSE) constitutes a deprecated MCP transport and has been superseded by Streamable HTTP, which offers equivalent streaming semantics alongside improved statelessness, load-balancer compatibility, and alignment with contemporary HTTP infrastructure standards.