Meta Tools in MCP: Why Are They Important?

February 25, 2026

Model Context Protocol, or MCP, is best understood as a standardization layer to connect third-party services to AI agents. It does this through primitives such astools/listortools/call, which expose operations that an AI agent can perform against an MCP server.

In its early stages, this standardization feels deceptively straightforward. A team can connect an agent to GitHub or an internal database, and the agent’s capabilities seem to expand linearly with each additional MCP server (and its tools). As the system grows, however, this apparent linearity proves to be misleading. With every additional tool comes its own descriptive payloads (i.e., schemas, argument contracts, descriptions). From a developer’s perspective, this pressure shows itself in two primary ways.

First, context efficiency progressively gets worse. Tokens are increasingly used to describe these tool schemas and argument contracts rather than reasoning about the user’s request. Second, more wrong decisions are made. As the agent is asked to choose among a larger set of tools, the probability of choosing the wrong tool also rises accordingly.

Anthropic’s operational experiments show that in multi-server configurations, tool definitions can consume tens of thousands of tokens before a conversation even begins. This is the structural dilemma that surfaces as MCP adoption scales. Agents need enough tool information to operate third-party services correctly, yet they operate within finite context windows and rely on imperfect tool-selection behavior.

As a result, MCP-based systems that continue to expose the entire tool library to agents are implicitly betting that the model can reliably search an ever-expanding ecosystem of tools and MCP servers.

This paper examines meta tools, or more precisely, tools that agents use to navigate, select, and activate other tools. It also raises the security risks that come with this additional meta layer, and why teams must take it seriously.

What are meta tools?

Before moving on, the term “meta tool” requires some additional clarification. MCP specifies what a “tool” is and how an agent invokes it through a small set of protocol primitives (liketools/list). It does not, however, introduce an explicit category called a “meta tool”. The “meta” property is not a feature embedded in MCP, but rather a way to describe what the tool does within the system.

A meta tool is best defined by its purpose: to help agents discover and activate other tools in an otherwise oversaturated library so that an agent can navigate reliably with limited context (or tokens). In small environments, where the tool library is limited, meta tools add little value. They only become relevant as tool libraries expand across multiple MCP servers. Under these conditions, the tool ecosystem should be thought of as an evolving capability layer rather than a fixed set of predefined tools.

Consequently, meta tools appear in multiple forms. Some are implemented directly as MCP tools, exposing the search interface of all available tools. Others operate as an intermediary layer that sits outside the agent’s direct tool surface, routing calls to multiple downstream MCP servers.

Categories of meta tools

Rather than treating all tools as equally visible and callable, meta tools add structure to the MCP system. The following categories show the primary ways that meta tools are used.

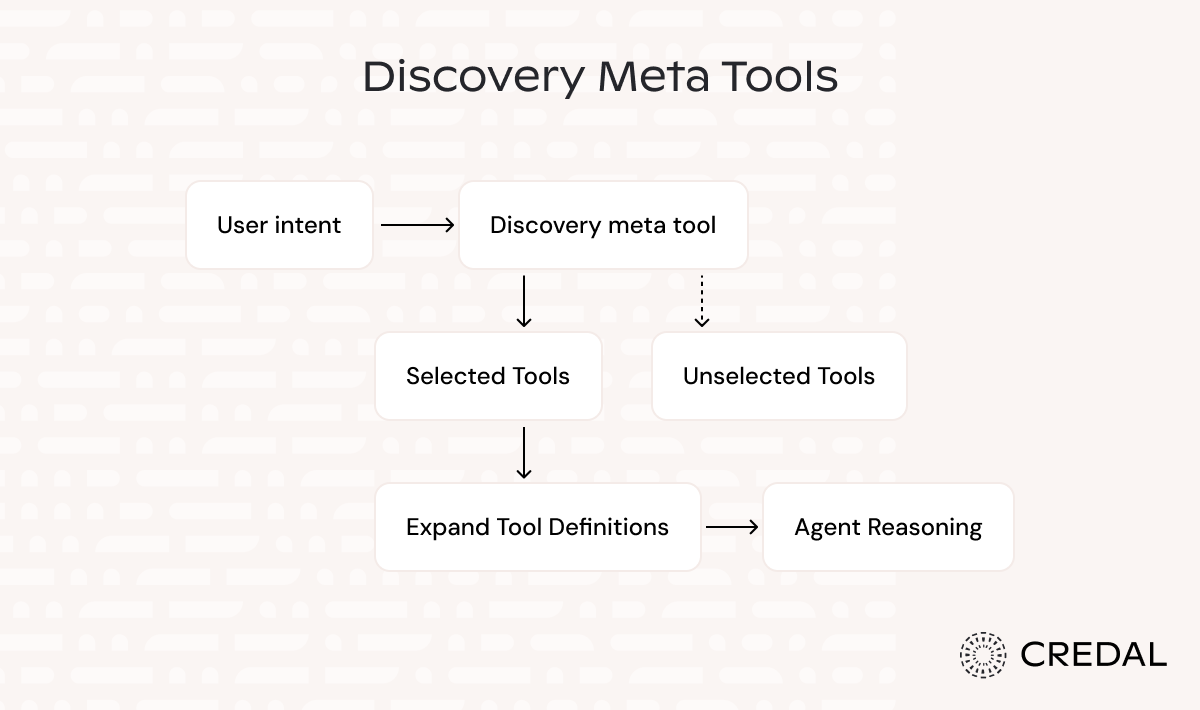

Discovery meta tools

The most common entry point for meta tools is tool discovery. Discovery meta tools introduce an extra retrieval step that returns only a small set of candidates that are relevant to the user’s intent. A great example is Anthropic’s tool search tool.

This is often implemented using lightweight search methods, such as regex matching or BM25, that operate over tool names and schemas. Once the most relevant tools are identified, only those candidates are expanded into full definitions for the agent to reason over.

As a result, discovery meta tools reduce context overhead while also improving tool-selection accuracy since the agent only chooses from a scoped set of tool operations.

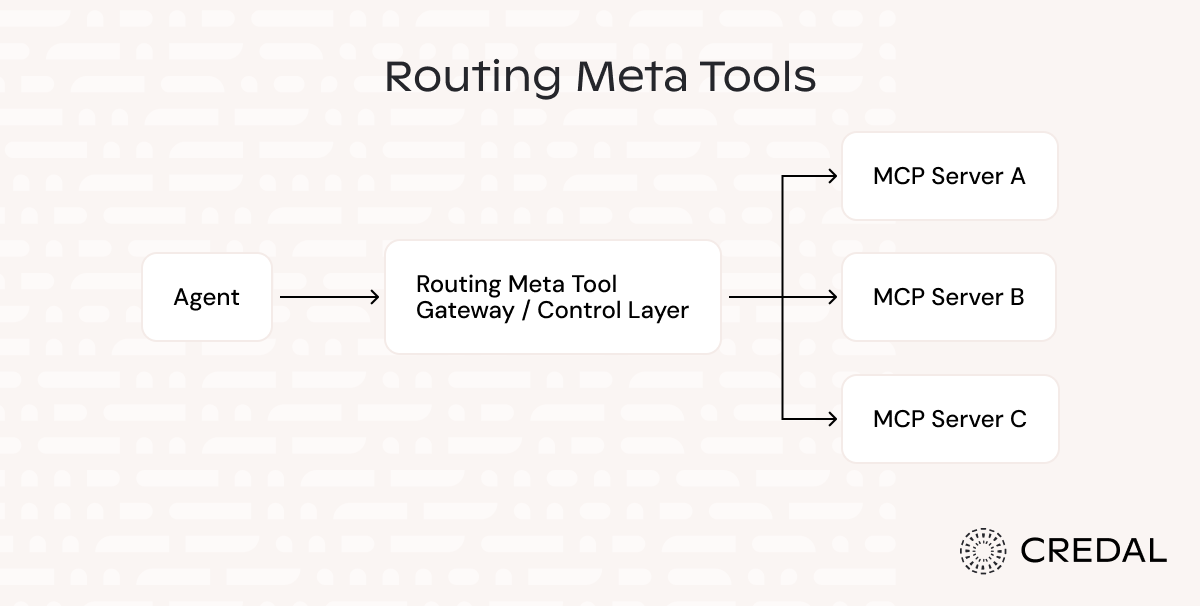

Routing meta tools

Teams rarely operate within a single system. Instead, they work over a variety of environments that each have their own tool libraries, which evolve independently. Routing meta tools are a direct response to this fragmentation. They aggregate multiple MCP servers into one interface so that agents can communicate with them through a single entry point. An example of this is MetaMCP by metatool-ai.

Additionally, it should be noted that MCP itself is a client-server pattern. An agent discovers and invokes tools that are exposed by individual MCP servers. Routing meta tools, on the other hand, tend to be server-to-server. Instead of being directly invoked in the agent’s tool selection, they live as a control layer outside of the agent’s environment.

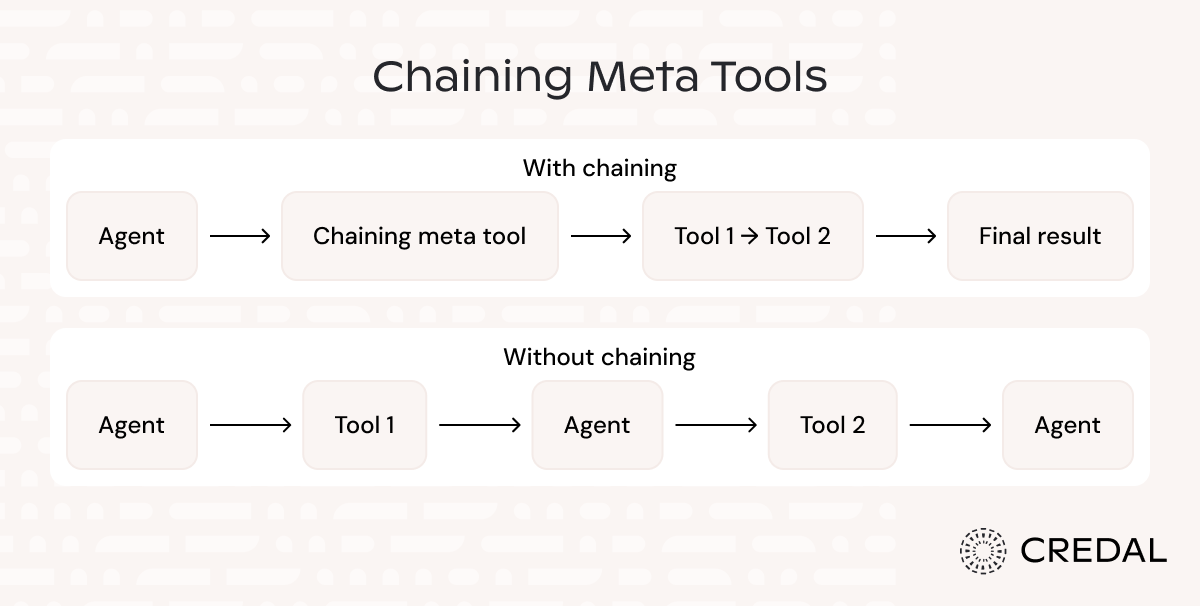

Chaining meta tools

Chaining meta tools acts exactly as they sound: they “chain” or connect tool calls together. Rather than having the agent reason through each step in natural language, chaining meta tools executes multiple operations as a single call. We can see this clearly with Anthropic’s programmatic tool calling.

With chaining meta tools, orchestration logic is moved from the prompts into the code. This approach is valuable since it avoids repeated inference passes between steps. On top of that, it also reduces context pollution that tends to surface with large intermediate results.

Why do meta tools matter?

Meta tools matter because they determine whether agentic systems stay both economically and operationally viable as additional MCP servers are added. This can be seen through two primary dimensions: cost and reliability.

Cost

Tool definitions and schemas consume context regardless of whether they are relevant to the agent’s task. That consumption is directly reflected in the inference costs. Anthropic’s experiments show that in multi-server configurations, trimming the exposed tool set reduced context usage by approximately 85% (from 77k tokens to 8.7k tokens).

So, while adding the additional retrieval step introduced some latency, the net impact was still a reduction in total cost since fewer tokens were processed to identify relevant tools and execute the correct action.

Reliability

Tool selection is not a simple classification problem. It is a decision made under ambiguous conditions, especially when multiple tools expose overlapping (or subtly different) capabilities. By bounding the candidate set, meta tools reduce the surface area in which selection errors can occur, and in turn, also improves the first-pass success rates for decisions made by agents.

Anthropic reports that enabling tool search increased Claude Opus 4.5 accuracy from 79.5% to 88.1%, which shows that with the right logic, systems can limit the number of downstream retries without having to upgrade the model itself.

When should we use meta tools?

It’s important to note that the benefits that come from meta tools do not fit every case. Discovery meta tools give the highest returns when tool catalogs are large and dynamic. Routing meta tools only become valuable when multiple MCP servers expose overlapping capabilities. Chaining meta tools is effective when workflows include multiple dependent steps. Outside of these conditions, meta tools introduce unnecessary complexity.

Furthermore, meta tools also add another layer to the system, which can become a prime target for attackers looking to influence agent behavior. Recognizing and managing this trade-off is essential before adopting meta tooling into a team's AI infrastructure.

The attack surface meta tools introduce

Meta tools derive their value by concentrating on the most relevant tools and operations. The same concentration, however, can become a double-edged sword when attackers target this meta layer, as influencing it affects every downstream tool interaction that the agent has.

One class of risk comes from the tool metadata itself. Microsoft’s MCP security describes tool poisoning as a form of indirect prompt injection in which an attacker embeds malicious instructions within the tool descriptions or schemas. What makes these attacks especially dangerous is that these instructions are not visible to end users, but discovery meta tools reason over them as part of the tool selection.

Another class of risk comes from the orchestration layer. The confused deputy problem, as described in MCP security best practices, shows what happens when a single OAuth client mediates access to multiple downstream APIs and is compromised. In this model, even a minor misconfiguration, such as accepting a token issued for an incorrect audience, can escalate privileges across all routed calls.

The bottom line is that meta tools cannot be evaluated solely on their performance benefits. Because they control discovery and execution authority, they must be subjected to the same level of scrutiny as all the other components in an agentic system.

This becomes much easier when supported by an enterprise-grade orchestration platform such as Credal. Credal provides a centralized environment for creating multi-agent workflows and deploying AI across an organization. It helps ensure that the introduction of meta tools does not add unmanaged risk, which typically comes with an additional abstraction layer.