💡Savvy Enterprises are already using AI to get ahead of their competition: here’s how

March 24, 2025

To paraphrase Jane Austen:

“It is a truth universally acknowledged, that a CIO at a Fortune 1000 company, must be in want of a safe way to deploy AI at the enterprise.”

Disruption is coming for every industry, and even traditional firms like PwC are investing big money into preparing for the new AI spring. Executives everywhere are trying to balance the existential need to start applying AI, so they don't get left behind, with their regulatory obligations to do all this in a safe, secure and compliant manner.

One of the key challenges executives face is that it's still unclear what problems AI is best placed to solve. Since our launch in March 2023, Credal has already enabled fast-moving enterprises with thousands of employees to safely execute tens of thousands of prompts, powering AI workflows from internal knowledge management to data analysis and software engineering.

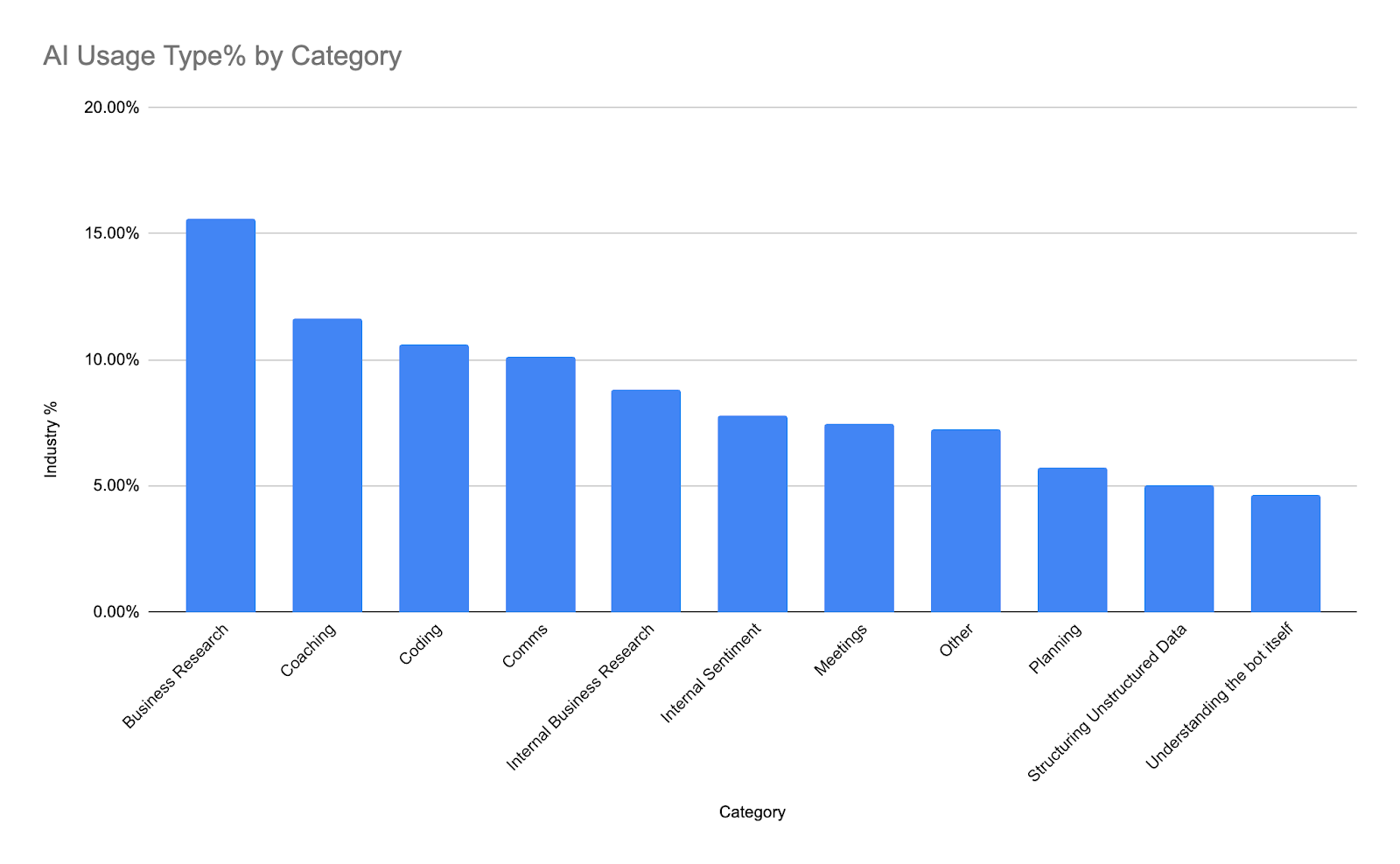

As enterprises venture into this new space, our customers often ask us how their use of AI stacks up against the industry at large. To help them out, we clustered our (anonymised and redacted) user prompts and tried to answer the question on everybody’s mind: how can AI safely deliver value to the enterprise today? Of course AI is moving insanely fast, so the set of use cases will only expand beyond today’s. But enterprises that get ahead of the curve now will level up their employees faster and, with the right foundational investments, can stay ahead of the curve for the next generation of innovation.

So first things first: what does the data actually say?

Saving each employee up to 4 hours a week in Internal Business Knowledge Retrieval (17.3% of AI usage)

The #1 use case for AI at the enterprise is perhaps not so surprising: helping employees understand what’s happening. Here are the types of prompts we’re talking about (these are not literal prompts our customers have written, but synthetically generated exemplars of the class of internal business knowledge retrieval questions):

- A sales manager asking, "How much revenue did we close last month in the Manufacturing vertical?"

- An engineer new to a project asking, “Who’s got the most commits recently on the ‘Generate Prompt Service’?”

- A new employee trying to understand the org chart asking “Who’s John’s manager?”

- An employee trying to figure out the expense policies: “Am I allowed to expense a lunch with a candidate we are trying to recruit?”

Instead of looking up information across multiple different sources, a user can write a prompt in Slack and receive a straightforward answer - neatly aggregated and cited by AI. Although this is an obvious use case for AI in many ways, many enterprises are still struggling to launch this workflow in production, because so few services have emerged that enable IT and InfoSec to have full visibility and control over where their data is going.

For the early adopters using Credal, active employees report saving up to 4 hours a week each. This is a radical shift in employee productivity - half a day every week won back. One of the most exciting aspects of this from our customers’ perspectives is that their employees are learning how to use AI before the competition. This gives Credal customers a clear advantage in their core business, making their workplaces more desirable locations for top talent and setting them up to innovate faster as AI disrupts more and more industries and workflows!

Improving Collaboration with AI enhanced comms (15.62%)

AI is also facilitating more effective communication within the workplace. For example:

- An engineering manager asking, “Draft an email to my team explaining the plan for our OKR setting process. Make sure the email is engaging and calls out that everyone’s feedback is important.”

- An employee asking, “Edit this Slack message to sound less casual”

- A digital marketer, asking “Re-write this social media post to make it more engaging to X audience”

- A content writer, asking, “Write me an outline for a blog post describing the benefits of using AI”

- A non-native language speaker asking, “Clean up this language to sound like it was written by a native speaker”

Writing, editing, massaging, and reworking communications is a huge percentage of employee time, especially at large organizations where voice, audience, and presentation can be critical. AI is helping employees iterate on their comms in seconds instead of minutes or hours. Instead of sweating over each individual word, an employee can simply provide high level direction and let the AI figure out the rest.

With Credal, we see that users need an average of 3.3 prompts before they have a ready-to-send version of their communications. I know I used to slog for hours over important emails to my senior stakeholders (which is honestly a small part of the reason I started this company…especially since my first investor update is now overdue 🙈 ).

But you don’t have to trust me. I chatted to Keith Peiris, the co-founder & CEO of Tome - a popular tool for building AI-powered presentations. He shared this insight with me:

“Most people open up a work artifact (e.g. presentation tool, document) with an idea in mind. That said, they often don't have the structure that will help their audience understand it. It turns out that the way to structure thought and ideas has been pretty constant over the past few decades even though the content has been different. One of the most compelling things about creating with LLMs is they give you a starting point to fill in vs. needing to form the story yourself. This turns making a presentation into a ‘fill in the blank’ exercise.” - Keith Peiris, Co-Founder & CEO at Tome

Once you have something, you can use LLM-like tools to perform artifact wide operations like sharpening, summarizing, and rewriting. You could imagine rewriting for different languages too.

Lastly, if you're in the middle of writing something yourself, asking an LLM to rephrase a sentence or two is a lot like riffing with a creative thought partner.

As with any other use case, data security is an essential consideration. Organizations must ensure that confidential conversations and sensitive information are protected and privacy measures must be in place throughout the process.

Retaining and Attracting Top Talent with Internal Sentiment Analysis (10.63% of AI usage)

A fascinating use case that we didn’t anticipate: managers and people leaders using AI to understand their employee sentiment. A few illustrative examples:

- An HR leader asking, “Summarise all the feedback from the marketing team in the latest employee engagement survey on the new marketing strategy”

- A Director or VP asking, “What is team culture like on each of the three teams under me? How much conflict is there on and between each team?”

- An executive asking, "How do you think the team would react if we changed our unlimited PTO policy to a set number of days?"

To answer each of these questions without AI, an employee would have to read and synthesize a vast amount of information: either from a 1,000-person employee engagement survey, or perhaps from 6 very busy Slack channels! For AI, this kind of information synthesis is table stakes. For effective employee sentiment analysis, an enterprise needs the right data inputs, good vector search to identify the relevant information for any given question, and extremely strong privacy controls to ensure that AI tools only check data that the questioner has access to, and that they mask any sensitive data before sending it to the AI provider itself.
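
To make the privacy half of that concrete, here is a minimal sketch in Python of the two controls described above: filter documents by what the questioner can already read, then mask sensitive values before anything is sent to an AI provider. Everything in it is an assumption for illustration (the `Document` type, the group names, the email-only masking rule); it is not Credal's implementation, and it leaves out the vector search step entirely.

```python
import re
from dataclasses import dataclass

@dataclass(frozen=True)
class Document:
    text: str
    allowed_groups: frozenset  # hypothetical: groups permitted to read this document

# Illustrative masking rule: email addresses only. Real deployments mask far more.
EMAIL_PATTERN = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")

def visible_documents(docs, user_groups):
    """Keep only documents the questioner is already allowed to read."""
    return [d for d in docs if d.allowed_groups & user_groups]

def mask_sensitive(text):
    """Replace email addresses with a placeholder before text leaves the company."""
    return EMAIL_PATTERN.sub("[EMAIL]", text)

def build_context(docs, user_groups):
    """Permission-filter first, then mask, then join into one prompt context."""
    return "\n".join(mask_sensitive(d.text) for d in visible_documents(docs, user_groups))

# Made-up documents for illustration only.
docs = [
    Document("Survey: jane@example.com says the new strategy is unclear.", frozenset({"hr"})),
    Document("Board notes: confidential restructuring plan.", frozenset({"exec"})),
]
context = build_context(docs, user_groups=frozenset({"hr"}))
print(context)
```

An HR user in this sketch only ever sees the survey text, with the email address replaced; the board notes never reach the prompt at all.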

We’ve seen AI save days if not full weeks from HR teams just in analysing survey responses. Although AI should never be a replacement for talking to your people, it can be a fantastic aid in gathering and synthesising lots of information, and learning the right questions to ask.

Making existing data resources more valuable by structuring unstructured data (5.73%)

Employees are using AI to turn lengthy, unstructured text into concise, structured data:

- An analyst asking, “Read this PDF and structure the key data points into a table”

- A customer success manager asking, “Plot the trends and patterns of customer feedback on a graph over time”

- A data visualisation developer asking, “Remove points that are more than two standard deviations from the mean from this table”
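
A request like the last one is typically answered with a few lines of code. As a rough sketch of what that might look like in Python, assuming the "table" is just a list of numbers and that "standard deviation" means the sample standard deviation (both are assumptions, as are the numbers):

```python
from statistics import mean, stdev

def drop_outliers(values, max_sd=2.0):
    """Return the values within max_sd sample standard deviations of the mean."""
    center = mean(values)
    spread = stdev(values)  # sample standard deviation; needs at least 2 values
    return [v for v in values if abs(v - center) <= max_sd * spread]

# Made-up numbers for illustration only.
readings = [10, 11, 9, 10, 12, 10, 11, 50]
cleaned = drop_outliers(readings)
print(cleaned)
```

Here the single extreme reading is dropped and the rest are kept in their original order.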

A long document will often contain several individual data points that a user is trying to move into a structured format, and they’ll use chained prompts to get the structured data they need. For example, a user might say “find the revenue for this company in Q1 2022”. Then they’ll say “Great, do it again for Q2 2022.” Once the AI has pulled out the data, it's trivial to continue the prompt: “Now, put those data points into a table showing revenue for company X over time.” This is a simple 30 second recipe for what could easily be an hour or two's worth of work converting a long PDF into a digestible table.

One of the world experts in this is Will Manidis, the CEO of ScienceIO, who helps companies make sense of medical text using AI. I was curious to see Will’s take on AI’s role in this:

“If you look outside of high tech industries, from healthcare to construction to insurance, almost everything a business does either takes unstructured data as input, or generates it. Billions of hours of human labor are spent reading, writing, and making sense out of these forms. These industries have seen basically zero software penetration as a result. Tableau can’t offer you anything if your data lives in a fax. The thing everyone misses about large language models is their greatest impact will be making all this legacy data accessible to software tools. Think of universal ETL, for your entire business, without configuration. Insane productivity gains.” - Will Manidis, Co-Founder & CEO at ScienceIO

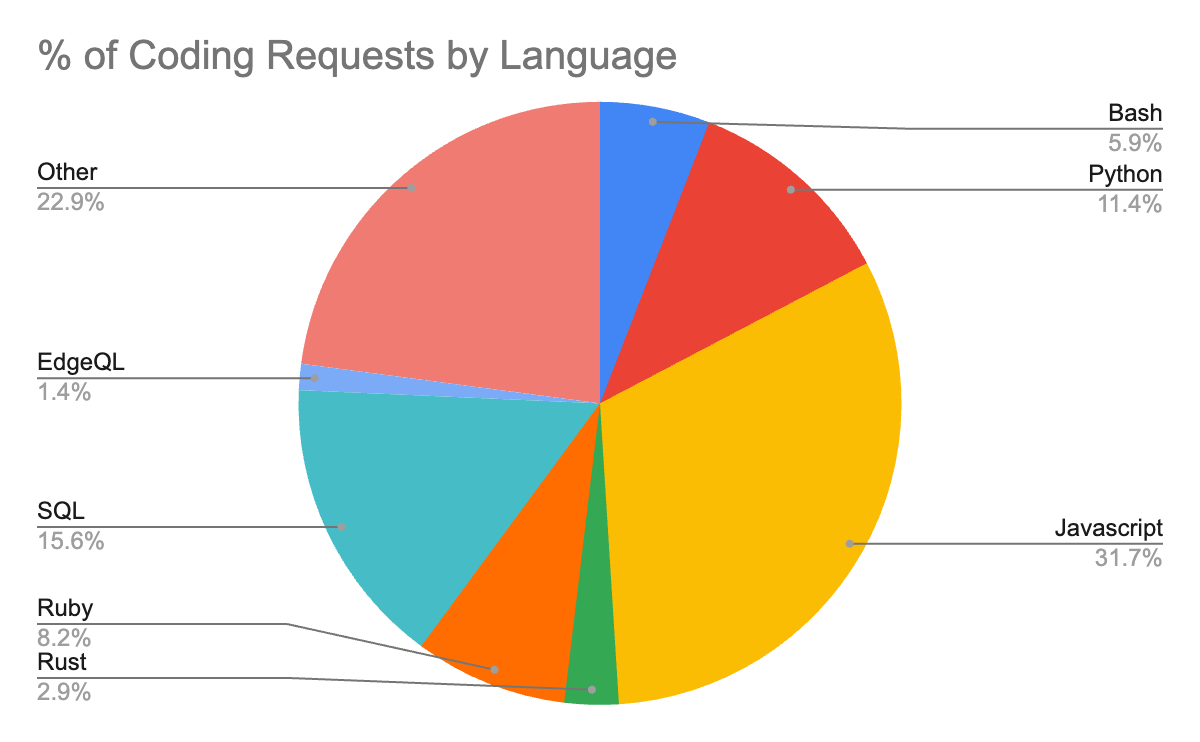

Levelling up every technical employee, from the Staff Engineer to the Data Analyst through Coding & Analysis (7.02%)

This one is particularly close to my heart, since it’s been something I’ve used AI for so much! Just recently, my cofounder Jack asked me to stand up a demo app to scope how the integration of Confluence would work. Having never worked with Confluence before, I turned to Credal first. After 6 prompts and almost no real thinking required, I had a fully working demo app in Node.js that could grab the relevant data we needed from Confluence, which Jack could then use as a guide to integrate into our main application. But that’s just us! What we’re seeing from our users:

- A support engineer armed with a user’s bug report asking, “When the user performs actions XYZ, they get the following error message. What are the likeliest root causes and what are some helpful debugging steps?”

- An analyst with rudimentary SQL knowledge asking, “Write me a SQL query to calculate the number of users that churned last week”
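
To give a flavour of what the analyst might get back, here is a sketch of one possible churn query, run against an in-memory SQLite database from Python. The `subscriptions` table, its columns, and the definition of churn (a subscription cancelled during the previous Monday-to-Sunday week) are all assumptions made up for this example; a real answer depends entirely on the company's own schema and churn definition.

```python
import sqlite3
from datetime import date, timedelta

def churned_last_week(conn, today):
    """Count users whose subscription was cancelled in the previous Monday-to-Sunday week."""
    this_monday = today - timedelta(days=today.weekday())
    last_monday = this_monday - timedelta(days=7)
    row = conn.execute(
        "SELECT COUNT(DISTINCT user_id) FROM subscriptions "
        "WHERE cancelled_at >= ? AND cancelled_at < ?",
        (last_monday.isoformat(), this_monday.isoformat()),
    ).fetchone()
    return row[0]

# Hypothetical table and made-up rows, for illustration only.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE subscriptions (user_id INTEGER, cancelled_at TEXT)")
conn.executemany(
    "INSERT INTO subscriptions VALUES (?, ?)",
    [(1, "2025-03-18"), (2, "2025-03-23"), (3, "2025-03-24"), (4, None)],
)
churned = churned_last_week(conn, today=date(2025, 3, 24))
print(churned)
```

Dates are stored as ISO strings so that plain string comparison orders them correctly, and users who never cancelled (a NULL `cancelled_at`) are not counted.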

Of course, one of the biggest production use cases for AI today is GitHub Copilot, so I had to talk to Ryan Salva, VP of Product at GitHub, who is responsible for GitHub’s Copilot efforts. Ryan explained his vision for the evolution of AI in this way:

“Over the next 5 years, AI will go from its status today as an extraordinarily sophisticated autocomplete, to being infused into every part of the Software Development Lifecycle. As its capabilities expand we can also expect to see increasing importance for the conversational model - as AI covers the more uncertain parts of early software development where the Engineer seeks to understand exactly what work needs to be done, and tries to architect a solution before any actual code is written. Despite all this, it seems that humans will still be in the driving seat of AI for the foreseeable future. Solving the low hanging fruit can get us from 35% Copilot acceptance rate today (itself a 2x improvement on traditional code autocomplete) to perhaps as high as 60%. But short of significant scientific advances in what AI can do, humans are still going to be needed to coach and guide the AI.” - Ryan Salva, VP of Product at GitHub

We’ve already seen some pretty incredible capabilities from AI in terms of its ability to reason about a vast array of coding languages, even ones that may not technically be in its training set!

As with other applications, privacy and IP are critical considerations. Ensuring that sensitive code or intellectual property does not end up in AI training datasets is a vital aspect of incorporating AI into the development process.

Defog.ai, for example, is pioneering a “privacy centric” approach to using AI to write code that analyzes data. I chatted to Rishabh, one of the Founders, who helped shed a little light on this from his perspective:

“AI Copilots for data analysis unleash enterprise productivity by eliminating the need to do manual boilerplate work. This is both an opportunity and a challenge for CIOs, who want to enable productivity but are concerned about sensitive data leaving their servers. Privacy friendly architectures that only use metadata (like SQL schemas) or can redact sensitive data (like customer names or financial information) are critical to get buy-in in the enterprise.” - Rishabh Srivastava, Co-Founder at Defog.ai
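
As a rough illustration of the metadata-only idea Rishabh describes, the sketch below builds a prompt from table definitions alone, so no row values ever leave the database. It uses Python with SQLite purely for convenience; the `schema_only_prompt` helper, the `customers` table, and the prompt wording are hypothetical, and this is not a description of how Defog.ai actually works.

```python
import sqlite3

def schema_only_prompt(conn, question):
    """Build a prompt from table definitions only; no row values are read."""
    rows = conn.execute(
        "SELECT sql FROM sqlite_master WHERE type = 'table' AND sql IS NOT NULL"
    ).fetchall()
    schema = "\n".join(r[0] for r in rows)
    return f"Schema:\n{schema}\n\nWrite a SQL query to answer: {question}"

# Hypothetical table with one made-up row of sensitive data.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE customers (id INTEGER, name TEXT, revenue REAL)")
conn.execute("INSERT INTO customers VALUES (1, 'Acme Corp', 1200.0)")
prompt = schema_only_prompt(conn, "What is total revenue?")
print(prompt)
```

The model sees column names and types, which is usually enough to write a query, while the customer name and revenue figure stay on the company's own servers.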

Meetings that don’t suck (7.41%)

AI is also making its presence felt in the realm of meetings, offering valuable support before, during, and after the meeting itself. Employees utilise AI in various meeting-related tasks, such as:

- A team lead, asking “Prepare some agenda points and discussion topics to serve as meeting collateral for our exec meeting next week”

- A sales rep, asking “What is the company line on how we compare to competitor X” during a sales call

- An operations lead, asking “Read this transcript and generate a meeting report with action items for follow up”

With these particular examples, we’ve seen mixed feelings about data security. For lower profile meetings amongst more junior employees, there seems to be a laissez-faire attitude about employees copy-pasting things into ChatGPT. But as soon as the meetings become higher level, such as strategic meetings or discussions of sensitive financial information, staying in control of how that data is used and by whom is a massive challenge.

The Iron Man Suit for the Financial Analyst

Alongside engineering, marketing and HR, Finance teams are another early adopting segment of AI. Rather than listen to me sound off about AI in the finance world, let’s hear from people who really know what they’re talking about.

Alex Lee is the CEO of Truewind, who are pioneering the use of AI to help companies with bookkeeping and financial modeling. I asked Alex for his thoughts, and he helped break it down for me:

“Finance teams have long preached about the importance of having tools to empower businesses to make data-driven decisions, by providing real-time insights and actionable recommendations. The rapid advancements in AI are getting us closer and closer to that vision. But it's not solely a technology problem. CIOs should focus on deploying solutions that offer a balance between automation and human intervention. I.e., if the financial analyst is supposed to focus on high-level strategic tasks, develop the tech to handle the tasks to equip the analyst with what they need. Lastly, it should go without saying, ensuring data privacy and security is a top priority.” - Alex Lee, CEO at Truewind

This made a lot of sense to me. AI by itself is nowhere near as powerful as AI coached by humans. For that reason, I personally am still a little bit skeptical of the short term future of the Auto-GPT maximalists: as far as I can see, most of the AI advancements that we see real, sticky business usage for still involve humans in some form or another. No doubt the rapid changes in the capabilities of the AI may put that in question in the near term, but right now, getting AI operationalized still seems to need some level of human input.

An Executive Coach - but for every employee (2.23%)

New managers and junior employees in particular are using AI as a coaching tool, guiding themselves through complex interpersonal situations at work. Employees turn to AI for advice and recommendations in a variety of scenarios, such as:

- An employee during EOY reviews, asking “I received this critical feedback during my review cycle. What are some sensible steps I can take?”

- A manager asking, “I have to deliver some difficult feedback to a sensitive employee. It’s important that they hear the feedback, but it’s also important that I don’t offend them. What is an empathetic way to deliver this feedback?”

Helping users understand AI Itself! (1.67%)

Many users initially have questions about AI’s abilities. Ironically, AI is actually very bad at understanding itself today. Across all of the major foundation models, we saw consistent patterns of AI models getting basic questions about their own capabilities wrong! GPT-4 would often say it was GPT-3 unless explicitly told not to in the system prompt. Anthropic’s Claude would often also say it couldn’t do certain things it could. A lot of users on their first query would ask things like ‘are you alive?’. But users pretty quickly get bored of these sorts of novelty questions, moving on to actual value creating work.

Bottom Line

The takeaway from all this should be simple: AI is here, AI is real, and the enterprises that learn to harness it today can get very real benefits across Engineering, Sales, HR, Finance and Ops - in short: every part of the business.

To paraphrase Jane Austen once again:

“A Large Language Model is the best recipe for happiness I ever heard of.”

👋🏽 If you’re an enterprise searching for a secure way to deploy AI and level up your workforce, reach out to me at ravin@credal.ai, or feel free to book a time to chat!

None of this blog post was written by AI. However, 80% of the first draft was recommended for deletion.